From Fine Dining to Energy: The Hidden Constraint Limiting AI at Scale

My wife and I were watching Top Chef the other night, not for the drama but for how the kitchen actually works.

If you watch closely, you start to notice something different. It’s not just the food. It’s the timing, the communication, the way one chef adjusts while another plates, and how someone catches a mistake before it leaves the kitchen. What looks smooth on the surface is really a constant stream of coordination happening in the background.

That coordination is the system.

And it works, up to a point.

Fine dining depends on that kind of human coordination. That’s what creates the experience, but it’s also what limits it. It doesn’t scale well. When labor tightens, when wages rise, or when experienced staff leave, the system starts to strain. We saw that after COVID. The craft didn’t disappear; the coordination layer got thinner.

You see the same pattern in places that matter a lot more than dinner.

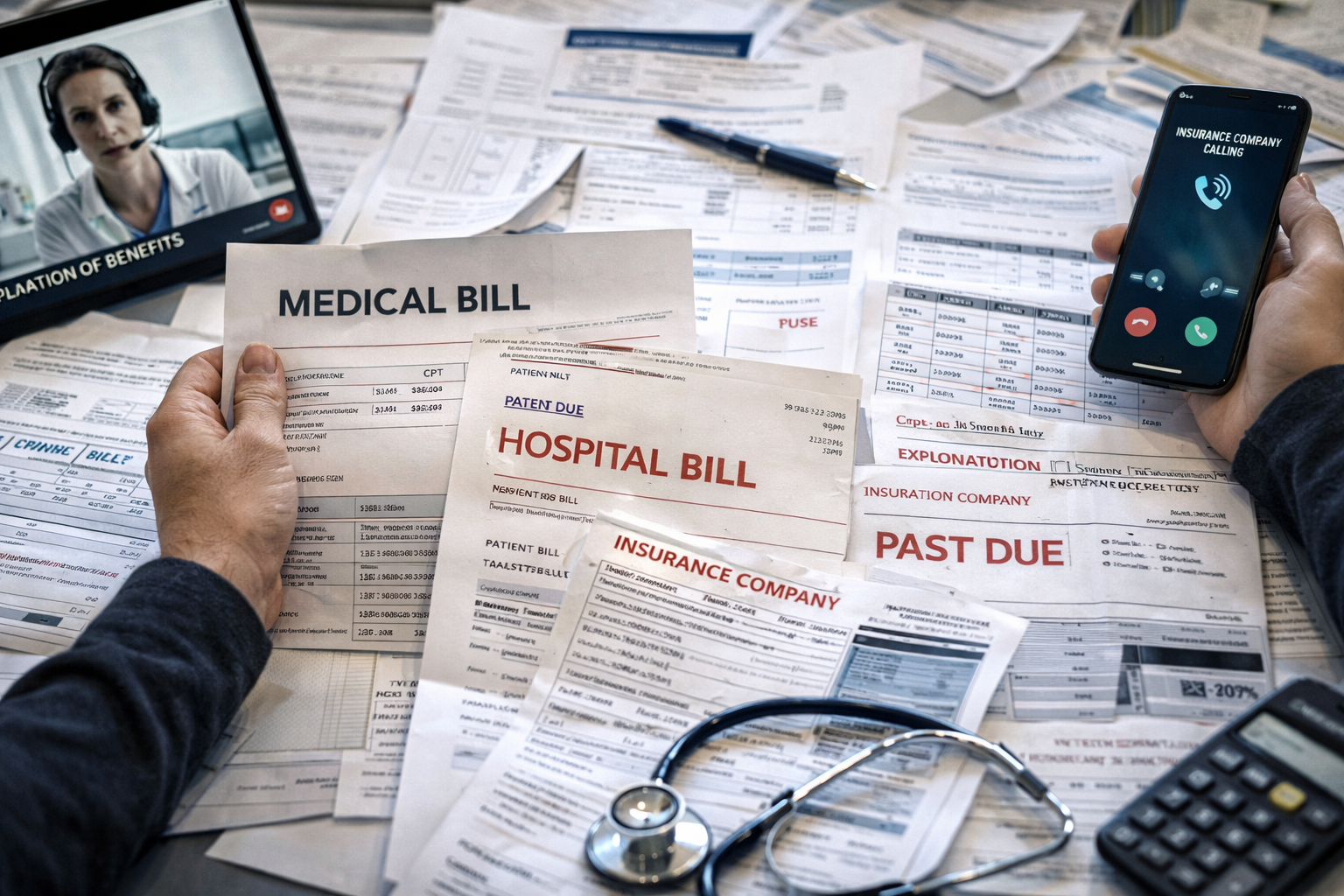

In healthcare, if you’ve ever dealt with a hospital bill or helped a family member navigate care, you’ve probably experienced this firsthand. A large part of the experience has nothing to do with care itself. It has to do with everything around it, including scheduling, approvals, coding, billing, and the back-and-forth with insurance.

You go in for one issue and end up with multiple bills, different codes, and a series of phone calls where each person can only see part of what’s going on. That isn’t random, and it isn’t just inefficiency. It’s how the system currently operates.

There are rules for what gets covered, how something is coded, what requires pre-approval, and how claims are submitted. But those rules don’t execute cleanly across systems, so they end up being interpreted over and over again by different people at different steps.

As a result, people step in and connect everything. They reconcile what happened with what the insurer expects, fix mismatches, resubmit claims, and explain outcomes that the system itself can’t clearly explain.

Estimates from groups like McKinsey & Company consistently suggest that administrative overhead is roughly a quarter of total healthcare spending. That number is widely cited. My interpretation, which goes beyond the estimates themselves, is that a meaningful portion of that overhead comes from coordination rather than care.

Over time, entire industries adapted around that model. In healthcare, billing complexity became a profit extraction mechanism. The fragmentation between providers, insurers, and systems creates room for interpretation, delay, and rework, and those costs don’t disappear. They get pushed onto the patient.

That shows up as higher bills, but also as friction that delays care. Approvals take longer. Issues require follow-up. Treatment gets deferred while the system catches up. The result is not just higher cost. It is worse outcomes.

Energy has already run into this issue, and the consequences have been real.

The grid used to be more predictable, but that is changing. There is more distributed generation, more variability in demand, and more real-time decisions that have to be made continuously. At the same time, the system still has to operate within strict rules around reliability, markets, and regulation.

Demand is also increasing in new ways. AI-driven data centers are putting additional pressure on the grid, requiring more power, more consistency, and more real-time coordination than the system was originally designed to handle.

Today, much of that coordination happens across separate systems and teams. Operators monitor what is happening, make adjustments, and keep the system within bounds.

That approach works at a certain level of complexity. However, as the system becomes more dynamic, the burden on human coordination increases. My inference, and it is only an inference, is that this creates risk: the system is asking people to manage more moving parts than can realistically be coordinated in real time.

We’ve already seen what happens when that coordination breaks down. Events like the February 2021 Texas winter storm outages exposed how fragile the system can be under stress. When coordination fails in that environment, the result is not just inefficiency. It can be large-scale outages and, in extreme cases, loss of life.

Research from firms like Gartner points to a related issue. You can automate individual tasks, but if coordination across systems remains manual, you eventually hit a ceiling. The bottleneck shifts from execution to alignment.

If you strip all of this down, the pattern looks pretty consistent.

The business owns part of the process, the systems live somewhere else, the rules and definitions sit in between, and the coordination ends up happening in people.

What that means in practice is that nothing is holding the system together in a consistent, system-driven way. People step in and do that work manually. They connect the dots, interpret the rules, and keep things moving when the system itself can’t.

For a long time, that worked well enough. Not because it was efficient, but because it was flexible. When systems didn’t line up, people could adjust. When rules conflicted, someone could reconcile them.

Large organizations adapted around that model too. Teams, systems, and metrics drift apart, and people become the layer that reconciles everything and keeps it moving.

The coordination never disappeared. It just became less visible.

AI is turning that invisible coordination into a hard constraint.

What’s changing now is not just scale, but speed and interdependence.

AI increases the number of decisions being made, the number of actions being triggered, and the number of systems that need to stay aligned at the same time. Things that used to happen one after another now happen simultaneously.

At the same time, organizations are running into a different but related constraint. Research from Accenture shows that nearly half of executives report they don’t have enough high-quality data to operationalize their AI initiatives.

That’s often framed as a data problem.

In practice, it’s a coordination problem across systems, teams, and rules.

Data lives in different systems. Definitions vary across teams. Context doesn’t carry cleanly from one part of the business to another. So even when the data exists, it’s not ready to be used consistently at scale.

The data-based claim here is that AI increases throughput and interaction across systems, while organizations report data readiness as a primary bottleneck. The assumption is that more interaction increases coordination requirements. The implication is that coordination, not just data, becomes the limiting factor.

If coordination still depends primarily on people, the system doesn’t actually scale. Instead, it becomes more fragile. Bottlenecks form, inconsistencies increase, and risk builds up in ways that are difficult to detect early.

At some point, relying on people to hold everything together stops working.

So what changes is not the work itself, but how the system holds together.

In healthcare, that would mean the system already understands the patient’s coverage, the procedure being performed, and the applicable rules. Approvals would happen based on context instead of manual back-and-forth. Coding would align with what actually occurred, and billing would move through the system without being reinterpreted at each step.
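To make "approvals based on context" a little more concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `Coverage` and `Encounter` types, the `decide` function, the plan ID, and the procedure codes are made up for illustration and do not reflect any real payer's rules or coding system. The point is only that when coverage, procedure, and rules are available in one place, a routine decision can be computed once instead of reinterpreted at each step, and anything the rules do not cover goes to a person.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coverage:
    """Hypothetical view of what a patient's plan covers."""
    plan_id: str
    covered_procedures: frozenset[str]
    preauth_required: frozenset[str]


@dataclass(frozen=True)
class Encounter:
    """Hypothetical record of what was actually performed."""
    patient_id: str
    procedure_code: str
    preauth_on_file: bool


def decide(coverage: Coverage, encounter: Encounter) -> str:
    """Return a decision from shared context instead of manual back-and-forth."""
    code = encounter.procedure_code
    if code not in coverage.covered_procedures:
        # Outside the known rules: hand off to a person rather than guess.
        return "needs_review"
    if code in coverage.preauth_required and not encounter.preauth_on_file:
        return "needs_preauth"
    return "approved"


# Illustrative data only; the codes below are placeholders, not real billing codes.
plan = Coverage(
    plan_id="PLAN-1",
    covered_procedures=frozenset({"PROC-A", "PROC-B"}),
    preauth_required=frozenset({"PROC-B"}),
)

routine = decide(plan, Encounter("patient-1", "PROC-A", preauth_on_file=False))
missing_preauth = decide(plan, Encounter("patient-1", "PROC-B", preauth_on_file=False))
unknown = decide(plan, Encounter("patient-1", "PROC-Z", preauth_on_file=False))
```

In this sketch the routine case is approved without anyone touching it, while the other two cases are surfaced with a specific reason, which is the shift in where coordination lives.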

In energy, it would mean the system understands its current state and the constraints it must operate within, and adjusts continuously. Instead of relying on operators to constantly reconcile conditions, the system would stay within bounds by design, with people stepping in when something falls outside expected conditions.

Even in fine dining, the direction is similar. The craft remains human, but the burden of coordination shifts. The system provides visibility into what is at risk, timing adjusts dynamically, and the team spends less effort holding things together and more effort on execution.

There is a term for this in technology.

It’s called a control plane.

The definition is straightforward. Every system that produces an outcome, whether that outcome is a meal, a medical result, or reliable power, has something that holds it together. It’s the part that knows the rules, understands what’s happening right now, and keeps everything moving in sync so the outcome actually happens.

A control plane is that part of the system.
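One way to picture that definition is as a small loop: compare what is happening right now against the rules, correct small drifts automatically, and hand anything larger to a person. The Python sketch below is a toy under stated assumptions, not how any real grid, hospital, or kitchen system is built. The `Rule` type, the `reconcile` function, the metric names, and all the numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule: keep a named metric inside [low, high]."""
    name: str
    low: float
    high: float
    escalate_beyond: float  # drift larger than this goes to a person


def reconcile(rules: list[Rule], state: dict[str, float]) -> list[tuple[str, str, float]]:
    """Compare current state to the rules and return the actions to take.

    Each action is (metric name, "adjust" or "escalate", value).
    Raises KeyError if the state is missing a metric that a rule names.
    """
    actions = []
    for rule in rules:
        value = state[rule.name]
        if rule.low <= value <= rule.high:
            continue  # already within bounds, nothing to do
        target = min(max(value, rule.low), rule.high)  # nearest in-bounds value
        if abs(value - target) > rule.escalate_beyond:
            # Too far outside expected conditions: people step in.
            actions.append((rule.name, "escalate", value))
        else:
            # Small drift: the system corrects itself.
            actions.append((rule.name, "adjust", target))
    return actions


# Illustrative numbers only, not real operating limits.
rules = [
    Rule("grid_frequency_hz", low=59.95, high=60.05, escalate_beyond=0.2),
    Rule("ticket_time_min", low=0.0, high=12.0, escalate_beyond=5.0),
]
state = {"grid_frequency_hz": 60.1, "ticket_time_min": 20.0}
actions = reconcile(rules, state)
```

Here the small frequency drift is adjusted back to the edge of its band, while the badly late ticket is escalated. The rules and the current state live in the system, and people are pulled in only for the exception.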

Today, in many industries, that role is largely played by people. That is why systems function as well as they do, and it is also why they begin to struggle under increased pressure.

The shift is not about removing humans from the loop. It is about changing where coordination lives.

Today, people act as the glue. They interpret, reconcile, and keep systems aligned.

Going forward, systems increasingly take on that responsibility, and people guide them.

Fine dining becomes more costly and less accessible because it cannot scale human coordination in the face of rising labor and material costs.

Healthcare doesn’t absorb the cost. It transfers the cost to the patient, and the friction delays treatment, which leads to worse outcomes.

Energy begins to feel fragile because coordination cannot keep up with the pace and complexity of the grid.

AI doesn’t create these problems, but it makes them much harder to ignore and much harder to operate around. To actually benefit from AI, humans have to move from being the system to guiding the system.

The system works today because people compensate for it.

The next generation of systems will work because people no longer have to.