Is Using Cloud-Based AI Cheaper Than On-Premise Compute? Think Again.

For most of my career I have relied heavily on cloud platforms like AWS and Azure for development environments. The model has always made sense. Spin up infrastructure when you need it. Run experiments. Shut it down when you are done. Pay only for what you use.

For traditional software development that model works extremely well.

But as we started building the development environment for our AI orchestration control plane, the economics began to look very different.

Our development workloads now include continuous experimentation with AI agents, agent swarms, LLM pipelines, Whisper transcription, document extraction, embeddings, and vector indexing. These are not occasional workloads. They are pipelines that run repeatedly while you test, iterate, and rebuild.

And that is where the cloud cost model begins to shift.

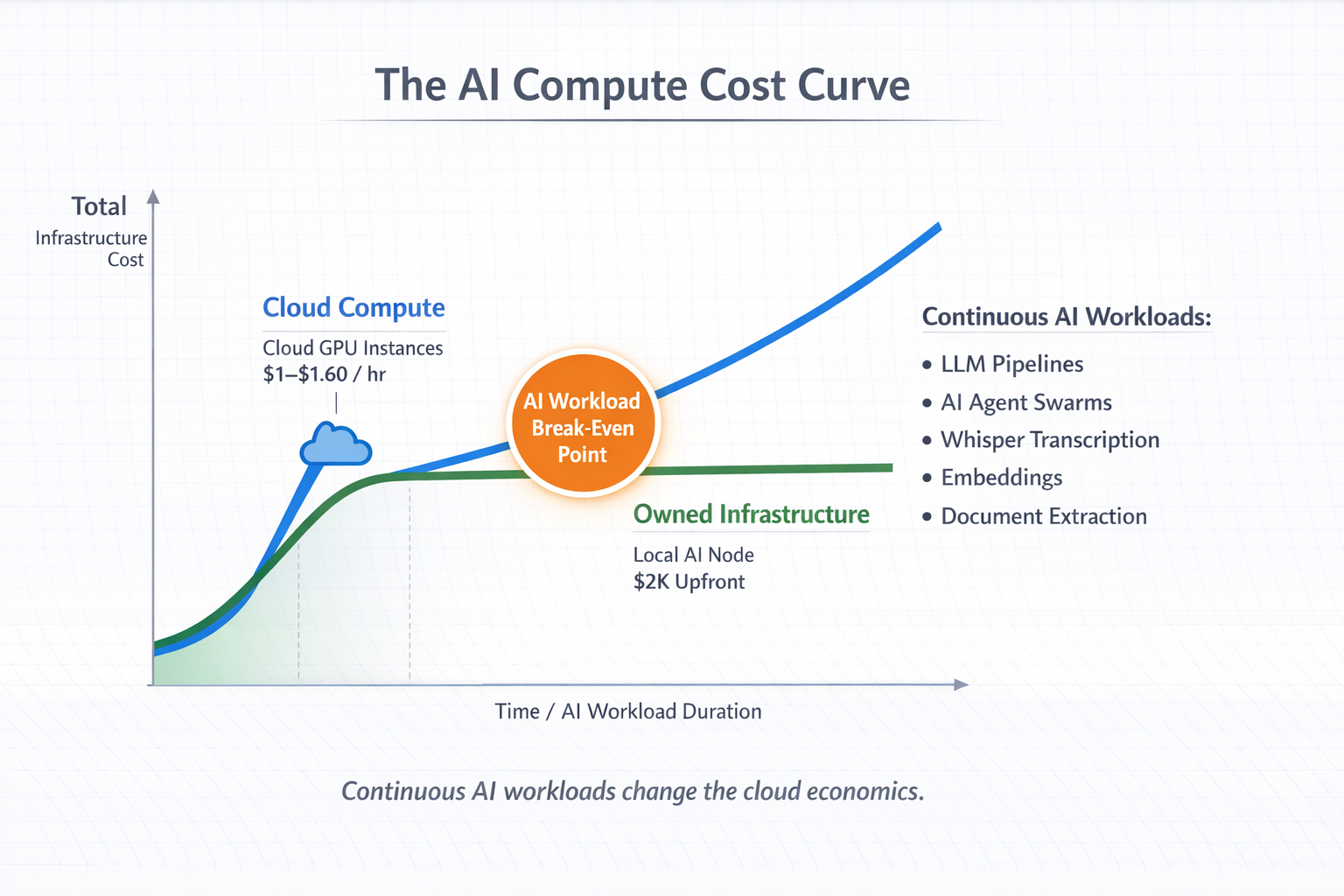

A typical GPU instance in AWS capable of running these workloads costs roughly $1 to $1.60 per hour, depending on configuration. At first glance that seems inexpensive. But development environments rarely run for just an hour or two. When you are experimenting with AI pipelines or agent swarms coordinating multiple models and tools, compute tends to run continuously.

Run that instance 24 hours a day for a 30-day month and you are looking at roughly $720 to $1,150 per month in compute costs.
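The arithmetic behind that monthly figure is simple enough to sketch. This is a minimal illustration, assuming a 30-day month and the $1 to $1.60 hourly range quoted above; the function name `monthly_cloud_cost` is mine, not part of any cloud SDK.

```python
def monthly_cloud_cost(hourly_rate: float,
                       hours_per_day: float = 24.0,
                       days_per_month: int = 30) -> float:
    """Estimated monthly cost of one on-demand instance.

    Assumes a flat hourly rate and ignores storage, data transfer,
    and any reserved or spot discounts.
    """
    return hourly_rate * hours_per_day * days_per_month


# Hourly range taken from the article; always-on usage is the assumption.
low = monthly_cloud_cost(1.00)
high = monthly_cloud_cost(1.60)
print(f"${low:,.0f} to ${high:,.0f} per month")
```

Lowering `hours_per_day` shows how quickly the number falls when the instance really is shut down between experiments, which is exactly the case where the cloud model still works well.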

That is when we decided to run a simple comparison.

A capable local AI workstation with a 24GB GPU, ample RAM, and fast NVMe storage can be built for around $2,000. In other words, at the hourly rates above, the break-even point between cloud compute and owned infrastructure is roughly two to three months of continuous usage.
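A rough sketch of that break-even estimate follows. It assumes the $2,000 build cost and the $1 to $1.60 hourly range from this article, and it deliberately leaves out electricity, maintenance, and resale value, so the result is closer to a lower bound on the payback period than a precise figure. The function name is illustrative.

```python
def break_even_months(hardware_cost: float,
                      hourly_rate: float,
                      hours_per_day: float = 24.0,
                      days_per_month: int = 30) -> float:
    """Months of cloud spend needed to equal a one-time hardware purchase.

    Simplification: ignores power, maintenance, and resale value of the
    workstation, and assumes a flat on-demand hourly rate.
    """
    monthly_cloud = hourly_rate * hours_per_day * days_per_month
    return hardware_cost / monthly_cloud


HARDWARE_COST = 2_000.0  # approximate workstation cost from the article

for rate in (1.00, 1.60):
    always_on = break_even_months(HARDWARE_COST, rate)
    workday = break_even_months(HARDWARE_COST, rate, hours_per_day=8)
    print(f"${rate:.2f}/hr: {always_on:.1f} months always-on, "
          f"{workday:.1f} months at 8 hours/day")
```

The second column is the useful one for a sanity check: even if the GPU is busy only during a working day, the payback period under these assumptions stays under a year.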

This realization is not unique to AI workloads. In fact, the economics of cloud versus owned infrastructure have been debated for years.

One of the most widely discussed analyses was published by Martin Casado and Sarah Wang of Andreessen Horowitz in their piece “The Cost of Cloud: A Trillion Dollar Paradox.” Their research examined financial data from large SaaS companies and found that cloud costs could represent nearly 50% of cost of goods sold for some companies. They also estimated that cloud infrastructure costs had reduced public market valuations by more than $100 billion across large software firms due to margin pressure.

Casado summarized the paradox succinctly:

“You're crazy if you don't start in the cloud; you're crazy if you stay on it.”

The point is not that cloud infrastructure is bad. Quite the opposite. Cloud platforms are extraordinary tools for speed, flexibility, and global reach. But once workloads become predictable and continuous, the economic tradeoffs begin to shift.

Several companies have already demonstrated this in practice.

Dropbox, for example, famously migrated significant portions of its infrastructure away from AWS and onto its own hardware. The company reported saving roughly $75 million over two years after moving workloads to owned infrastructure.

This trend is often referred to as cloud repatriation, where organizations selectively move certain workloads off public cloud platforms once they reach sufficient scale.

AI workloads accelerate this economic shift even further.

Modern AI systems are extremely compute-intensive. Training is expensive, but even inference, experimentation, and multi-agent orchestration require sustained GPU utilization. When you are continuously running agent swarms, embeddings, vector indexing, and document pipelines, the “pay as you go” cloud model can quietly turn into a very large monthly bill.

That does not mean the answer is to abandon the cloud.

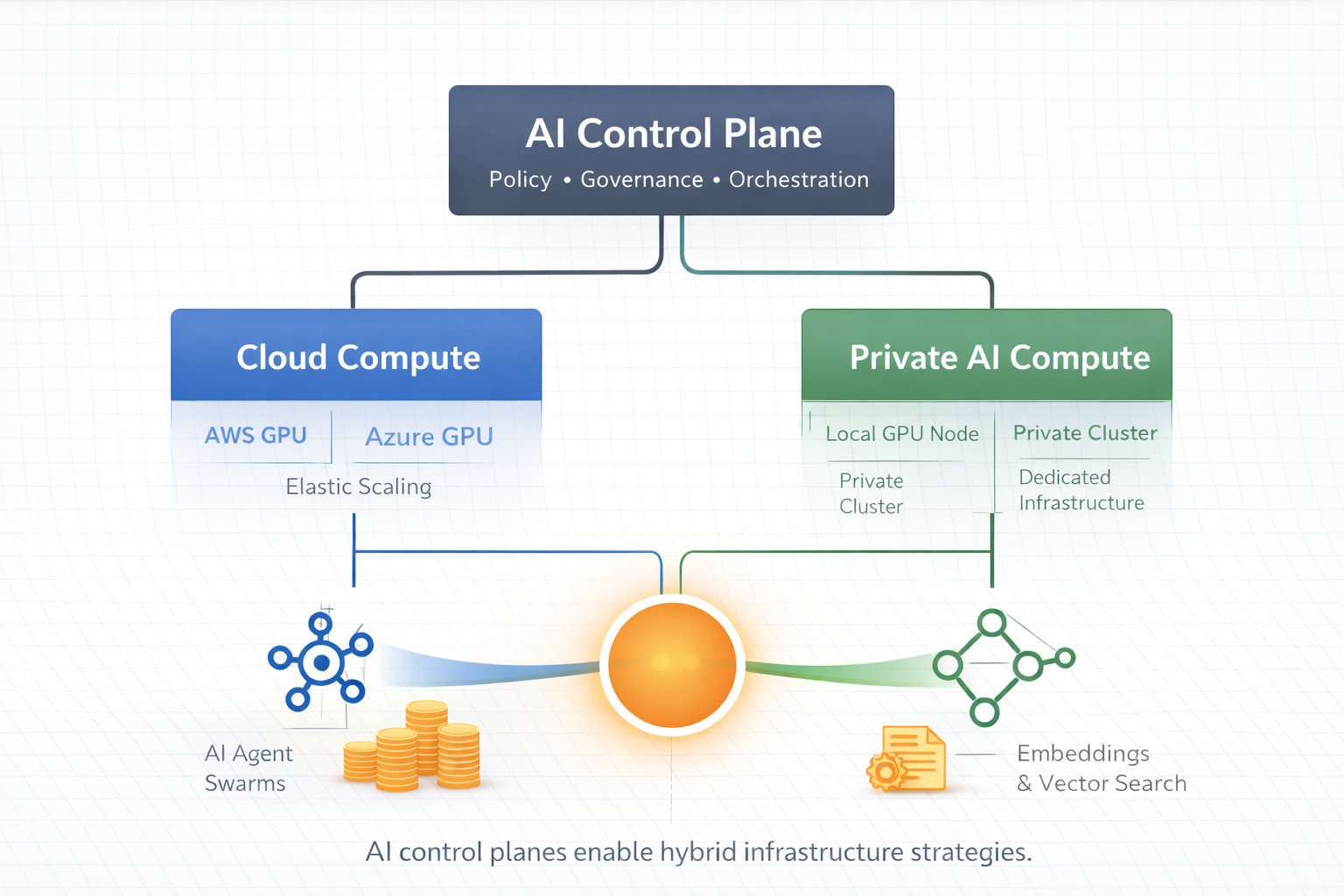

In practice the emerging architecture is hybrid infrastructure.

Cloud platforms remain ideal for:

- burst workloads
- global distribution
- production scaling
- managed services

Owned infrastructure often becomes more economical for:

- continuous development workloads
- high-utilization compute
- predictable AI pipelines
- experimentation environments
- agent swarm simulations
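One way to make the split above concrete is to ask at what daily utilization owned hardware becomes the cheaper option. The sketch below is only an illustration: the 24-month amortization period is my assumption, not a figure from our analysis, and the model again ignores power and maintenance. The function names are hypothetical.

```python
def break_even_hours_per_day(hardware_cost: float,
                             hourly_rate: float,
                             amortization_months: int = 24,
                             days_per_month: int = 30) -> float:
    """Daily GPU hours at which cloud spend equals amortized hardware cost."""
    monthly_owned = hardware_cost / amortization_months
    return monthly_owned / (hourly_rate * days_per_month)


def cheaper_option(hours_per_day: float,
                   hardware_cost: float = 2_000.0,
                   hourly_rate: float = 1.00,
                   amortization_months: int = 24) -> str:
    """Return "owned" or "cloud" for a given sustained daily utilization."""
    threshold = break_even_hours_per_day(
        hardware_cost, hourly_rate, amortization_months
    )
    return "owned" if hours_per_day > threshold else "cloud"


# Occasional burst work versus a pipeline that runs most of the working day.
print(cheaper_option(hours_per_day=1))   # low utilization
print(cheaper_option(hours_per_day=8))   # sustained utilization
```

Under these assumptions the crossover sits at only a few GPU hours per day, which is why continuous development workloads land on the owned side of the list while bursty ones stay in the cloud.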

In our case, the conclusion was straightforward. For the development environment of our AI orchestration control plane, owning a local compute node dramatically reduced the cost of experimentation while still allowing us to leverage the cloud when we need elasticity.

The lesson is not that cloud is expensive or that on-premise infrastructure is always better.

The lesson is that AI is changing the compute economics again.

And the smartest architectures will increasingly combine both models, using each where it makes the most economic and operational sense.